In an article published in the journal Results in Engineering, researchers investigated applications of reinforcement learning (RL) in guidance, navigation, and control (GNC) systems of fixed-wing unmanned aerial vehicles (UAVs). They focused on enhancing motion planning for dynamic target interception and waypoint tracking. The findings demonstrated how RL can improve the robustness, efficiency, and adaptability of aerial vehicle control systems, advancing autonomous and intelligent flight operations.

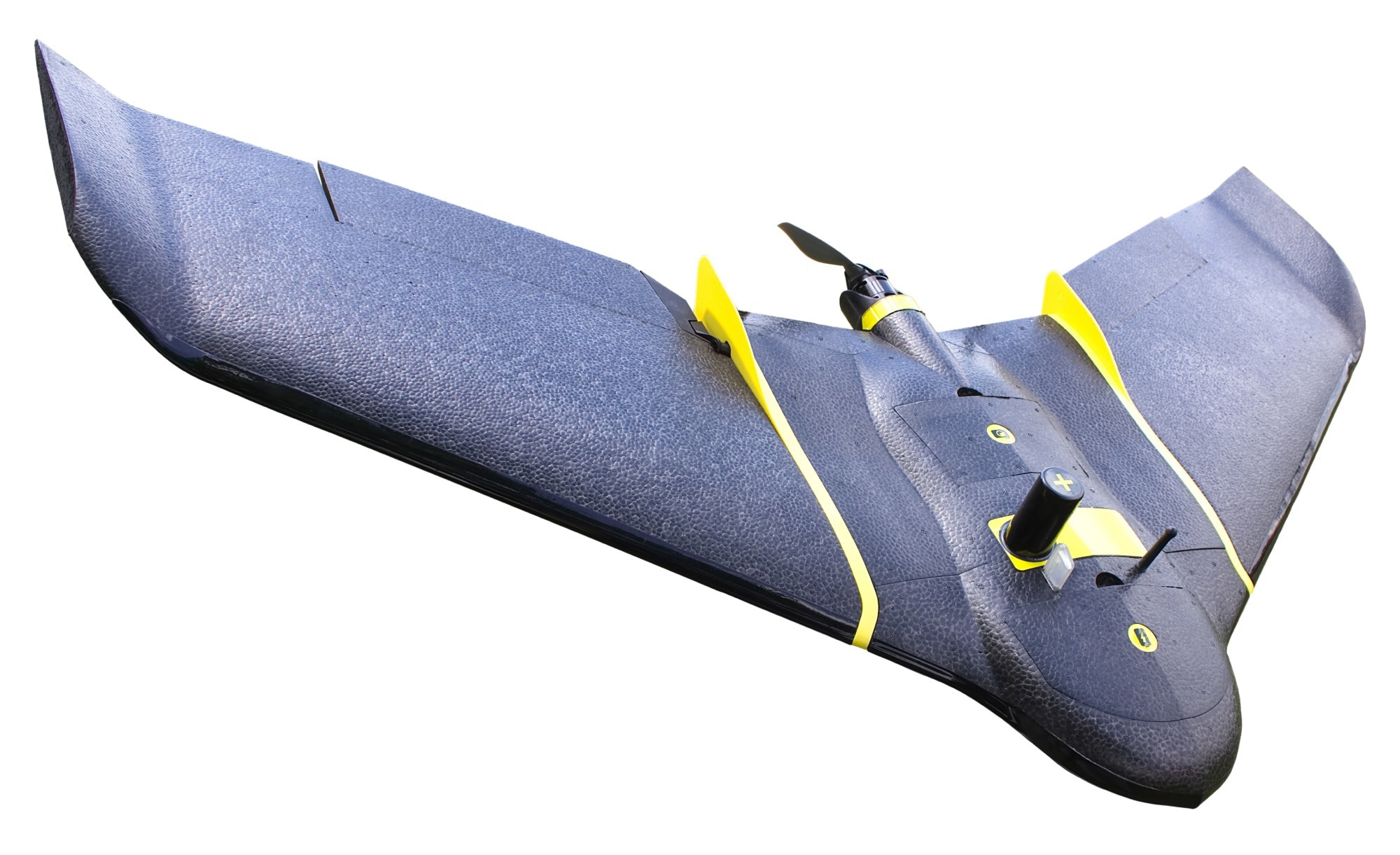

Study: Control and Motion Planning of Fixed-wing UAVs Through Reinforcement Learning. Image Credit: Adzem/Shutterstock

Background

The integration of autonomous systems into everyday life is rapidly advancing, with significant implications for both immediate and long-term applications. Autonomous vehicles, such as self-driving taxis in urban environments, exemplify the growing capability of machines to make independent or semi-independent decisions. Central to this evolution is artificial intelligence (AI), and more specifically, machine learning techniques that enable these systems to operate effectively.

RL has emerged as a powerful AI method for control and decision-making tasks. Notable achievements include RL systems surpassing world champions in intricate games like Go and chess, enabling robots to play soccer autonomously, and optimizing energy production in wind turbines. These successes highlight RL's transition from purely academic research to practical, real-world applications. In the domain of aerial vehicles, RL offers promising opportunities for advancements in autonomous navigation, robust control, and exploration, applicable to both rotary-wing and fixed-wing UAVs. These applications have the potential to match or even surpass the capabilities of traditional GNC systems.

Traditional flight control methods are often hampered by their intricate design requirements, necessitating extensive expertise from control engineers and relying heavily on historical experience within specific disciplines. Such methods can be cumbersome to develop and may lack adaptability across different scenarios.

This paper aimed to address these limitations by exploring the application of RL in GNC systems for aerial vehicles. Specifically, it focused on two key applications: waypoint tracking and dynamic target interception. By doing so, this research sought to simplify the design process and enhance the robustness, adaptability, and generalization of aerial vehicle control systems. Through various test cases and methodologies, the paper evaluated the potential of RL to overcome the challenges faced by conventional GNC approaches, paving the way for more autonomous and intelligent flight operations.

Waypoint Tracking

RL offered promising applications for aerial vehicle control, particularly in motion planning for autonomous fixed-wing aerial vehicles. Traditionally, RL algorithms have focused on low-level tasks like attitude control or set-point tracking. This research shifted towards high-level tasks, simplifying complex designs like waypoint tracking systems. RL could learn optimal trajectories and actions from the aircraft's state to a target waypoint, mapping high-level goals to low-level commands directly.

Model-free RL algorithms like soft actor-critic (SAC) and proximal policy optimization (PPO) were effective baselines for these tasks. The RL framework used an actor-critic structure in which the environment comprised the agent's aircraft dynamics and the target waypoint dynamics. The critic network evaluated state-action pairs, while the actor network selected command actions guided by the critic's quality (Q)-values.
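To make the actor-critic division of labor concrete, the following is a minimal sketch of one update step. It is a simplified deterministic actor-critic with linear function approximators, not SAC or PPO and not the authors' implementation; the function names, feature layout, and learning rates are all assumptions chosen for illustration.

```python
import math

def actor_action(theta, state):
    """Actor: maps the state to a bounded command in [-1, 1] (hypothetical linear-tanh policy)."""
    return math.tanh(sum(t * s for t, s in zip(theta, state)))

def critic_q(w, state, action):
    """Critic: scores a state-action pair with a linear Q-value estimate."""
    feats = list(state) + [action]
    return sum(wi * fi for wi, fi in zip(w, feats))

def actor_critic_step(theta, w, state, reward, next_state,
                      gamma=0.99, lr_actor=0.01, lr_critic=0.05):
    """One temporal-difference update of the critic, then one actor update."""
    action = actor_action(theta, state)
    next_action = actor_action(theta, next_state)

    # Critic update: move Q(s, a) toward the bootstrapped target r + gamma * Q(s', a').
    td_target = reward + gamma * critic_q(w, next_state, next_action)
    td_error = td_target - critic_q(w, state, action)
    feats = list(state) + [action]
    new_w = [wi + lr_critic * td_error * fi for wi, fi in zip(w, feats)]

    # Actor update: follow the critic's gradient dQ/da through the policy (chain rule).
    dq_da = new_w[-1]                # derivative of the linear Q with respect to the action
    da_dz = 1.0 - action ** 2        # derivative of tanh at the current pre-activation
    new_theta = [t + lr_actor * dq_da * da_dz * s for t, s in zip(theta, state)]
    return new_theta, new_w, td_error
```

In practice the actor and critic would be neural networks trained from replayed or batched experience; the sketch only shows how the critic's estimate drives the actor's improvement.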

This method enhanced the efficiency and effectiveness of waypoint tracking by reducing reliance on intricate traditional designs. The researchers illustrated this concept, showing the Markov decision process (MDP) for tasks like waypoint tracking and dynamic target interception. Overall, RL provided a streamlined, intelligent approach to aerial vehicle control, offering robustness and adaptability in complex environments.
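As a rough illustration of how waypoint tracking can be posed as an MDP, the sketch below defines a toy environment with a state, an action, a transition, and a reward. It assumes a planar constant-speed kinematic model rather than the full fixed-wing dynamics used in the study, and the class name `WaypointEnv`, the progress-based reward, and every numeric value are hypothetical.

```python
import math

def wrap_angle(angle):
    """Wrap an angle to the interval [-pi, pi)."""
    return (angle + math.pi) % (2.0 * math.pi) - math.pi

class WaypointEnv:
    """Toy waypoint-tracking MDP (assumed planar kinematics, constant airspeed)."""

    def __init__(self, waypoint=(100.0, 0.0), speed=20.0, dt=0.1,
                 capture_radius=5.0, max_turn_rate=0.5):
        self.waypoint = waypoint
        self.speed = speed
        self.dt = dt
        self.capture_radius = capture_radius
        self.max_turn_rate = max_turn_rate
        self.reset()

    def reset(self):
        self.x, self.y, self.heading = 0.0, 0.0, 0.0
        return self._observe()

    def _observe(self):
        # Observation: distance to the waypoint and bearing error relative to the heading.
        dx, dy = self.waypoint[0] - self.x, self.waypoint[1] - self.y
        return (math.hypot(dx, dy), wrap_angle(math.atan2(dy, dx) - self.heading))

    def step(self, turn_rate):
        # Action: commanded turn rate in rad/s, clipped to the assumed limit.
        turn_rate = max(-self.max_turn_rate, min(self.max_turn_rate, turn_rate))
        prev_dist = self._observe()[0]
        self.heading = wrap_angle(self.heading + turn_rate * self.dt)
        self.x += self.speed * math.cos(self.heading) * self.dt
        self.y += self.speed * math.sin(self.heading) * self.dt
        dist, bearing_err = self._observe()
        done = dist <= self.capture_radius
        # Reward: progress toward the waypoint, plus a bonus on arrival.
        reward = (prev_dist - dist) + (100.0 if done else 0.0)
        return (dist, bearing_err), reward, done

def run_episode(env, gain=2.0, max_steps=500):
    """Roll out a hand-written proportional policy as a stand-in for a learned actor."""
    obs, total, done = env.reset(), 0.0, False
    for _ in range(max_steps):
        obs, reward, done = env.step(gain * obs[1])
        total += reward
        if done:
            break
    return total, done
```

A learned policy would replace the proportional rule in `run_episode`, mapping the observation directly to the turn-rate command.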

Dynamic Target Interception

Another key application of RL in aerial vehicle control was dynamic target interception. Traditional GNC systems for intercepting moving targets are intricate and time-consuming to design. RL could streamline this process, enabling UAVs to autonomously learn interception capabilities with minimal human intervention. Previous research often focused on multi-agent setups or single-agent setups with fixed-trajectory evaders. This work proposed an approach where the pursuer learned to catch an evader exhibiting various escape behaviors, enhancing generalization to different strategies.
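One way to expose a pursuer to varied escape behaviors is to sample the evader's strategy at the start of each training episode. The sketch below illustrates that idea only; the three behaviors, their parameters, and the planar motion model are hypothetical and are not taken from the paper.

```python
import math
import random

# Each hypothetical behavior maps elapsed time (s) to a turn-rate command (rad/s).
def straight(t):
    return 0.0

def constant_turn(t):
    return 0.2

def weave(t):
    return 0.4 * math.sin(0.5 * t)

EVADER_BEHAVIORS = {"straight": straight, "constant_turn": constant_turn, "weave": weave}

def sample_evader(rng):
    """Pick one escape behavior at random for the coming episode."""
    name = rng.choice(sorted(EVADER_BEHAVIORS))
    return name, EVADER_BEHAVIORS[name]

def rollout_evader(behavior, steps=50, speed=15.0, dt=0.1):
    """Integrate the evader's planar path under the chosen behavior."""
    x, y, heading = 0.0, 0.0, 0.0
    path = []
    for k in range(steps):
        heading += behavior(k * dt) * dt
        x += speed * math.cos(heading) * dt
        y += speed * math.sin(heading) * dt
        path.append((x, y))
    return path

rng = random.Random(0)  # seeded so the sampled behavior is reproducible
name, behavior = sample_evader(rng)
evader_path = rollout_evader(behavior)
```

Training against a mix of behaviors, rather than a single fixed trajectory, is what pushes the pursuer's policy toward generalization.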

The RL framework used a model-free actor-critic architecture, with the reward based on the dynamics of the agent and the relative position of the evader, aiming for maximum reward upon successful interception. Complex tasks like autonomous navigation in hazardous environments and collision avoidance require robust RL systems.
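A reward of this kind can be sketched as a shaped function of the pursuer-evader separation, with the largest value reserved for capture. The function below is an assumed form for illustration; the capture radius, bonus, and step penalty are hypothetical values, and the paper's actual reward may differ.

```python
import math

def interception_reward(pursuer_pos, evader_pos, prev_distance,
                        capture_radius=10.0, capture_bonus=100.0, step_penalty=0.01):
    """Return (reward, distance, captured) for one time step.

    Positions are (x, y, z) tuples in meters. All constants are assumptions.
    """
    distance = math.dist(pursuer_pos, evader_pos)
    if distance <= capture_radius:
        # Successful interception yields the maximum reward and ends the episode.
        return capture_bonus, distance, True
    # Otherwise reward closing the gap and lightly penalize elapsed time.
    reward = (prev_distance - distance) - step_penalty
    return reward, distance, False
```

For example, a pursuer that closes from 60 m to 50 m in one step earns roughly 10 minus the step penalty, while any step ending inside the capture radius earns the full bonus.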

Enhancing robustness involved addressing external and internal perturbations through methodologies like domain randomization and adversarial RL, which helped mitigate the simulation-to-real gap. Sensor noise, wind gusts, and aerodynamic factors were test cases for assessing system robustness. Additionally, multi-task RL and curriculum learning could equip agents with the capabilities to handle multiple tasks with a unified policy. Further research in this area is essential for developing robust, adaptable autonomous systems for complex real-world aerial GNC tasks.
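Domain randomization can be sketched as resampling the perturbations at the start of every training episode so that the policy never sees exactly the same conditions twice. The example below assumes three perturbation sources matching the test cases named above (wind, sensor noise, aerodynamic variation); the parameter names and ranges are hypothetical.

```python
import random
from dataclasses import dataclass

@dataclass
class EpisodeParams:
    wind_x: float            # steady wind component along x, m/s
    wind_y: float            # steady wind component along y, m/s
    sensor_noise_std: float  # standard deviation of additive sensor noise
    lift_scale: float        # multiplicative error on an aerodynamic coefficient

def sample_episode_params(rng, max_wind=5.0, max_noise_std=0.5, aero_spread=0.1):
    """Draw one set of perturbations for the next episode (assumed uniform ranges)."""
    return EpisodeParams(
        wind_x=rng.uniform(-max_wind, max_wind),
        wind_y=rng.uniform(-max_wind, max_wind),
        sensor_noise_std=rng.uniform(0.0, max_noise_std),
        lift_scale=rng.uniform(1.0 - aero_spread, 1.0 + aero_spread),
    )

def noisy_observation(true_obs, params, rng):
    """Corrupt a true observation with zero-mean Gaussian sensor noise."""
    return [v + rng.gauss(0.0, params.sensor_noise_std) for v in true_obs]

rng = random.Random(42)  # seeded for reproducibility
episode_params = [sample_episode_params(rng) for _ in range(3)]
```

A simulator would apply `wind_x`, `wind_y`, and `lift_scale` inside its dynamics model, while `noisy_observation` would wrap whatever the agent observes at each step.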

Conclusion

In conclusion, the researchers explored RL in the GNC systems of fixed-wing UAVs, focusing on waypoint tracking and dynamic target interception. RL showed promise in simplifying complex designs and improving robustness, adaptability, and efficiency. By using model-free RL algorithms like SAC and PPO within an actor-critic framework, the authors demonstrated enhanced motion planning capabilities.

Additionally, RL facilitated dynamic target interception, generalizing to various evasion strategies. The research underscored RL's potential to surpass traditional GNC methods, paving the way for more autonomous, intelligent aerial vehicle control systems, and highlighted the need for further exploration in robust, adaptable RL applications.

Journal reference:

- Francisco Giral, Ignacio Gomez, Soledad Le Clainche, Control and motion planning of fixed-wing UAV through reinforcement learning, Results in Engineering, Volume 23, 2024, 102379, ISSN 2590-1230, https://doi.org/10.1016/j.rineng.2024.102379, https://www.sciencedirect.com/science/article/pii/S2590123024006340