In a study with 200 participants, AI-edited images produced 1.67 times as many false recollections as unedited images, and AI-generated videos made from those edited images produced 2.05 times as many, the strongest effect of any condition.

The paper also explored potential applications in human-computer interaction (HCI) and addressed ethical, legal, and societal concerns associated with AI-driven image manipulation.

Background

Memory-editing technologies have long been a focal point of both science fiction and cognitive psychology. The concept of altering or erasing memories has been explored in films like Eternal Sunshine of the Spotless Mind and Inception.

In psychology, research on false memories (recollections of events that never occurred or that are distorted) has been central because of their potential impact on witness testimony, decision-making, and legal processes.

Early studies, such as Loftus and Palmer's investigation into eyewitness memory, demonstrated how subtle wording changes can alter recollections.

Similarly, the "Lost in the Mall" study revealed that entirely fabricated childhood memories could be implanted in participants. While these experiments advanced understanding, they were conducted in controlled settings and relied on manually prepared manipulations, not automatically generated content.

Recent advancements in AI present a new frontier for memory alteration, especially in automating the creation and dissemination of manipulated content.

This study is among the first to provide empirical evidence on how AI-edited images and videos affect memory formation, exploring both the risks and potential therapeutic benefits of AI-assisted memory modification.

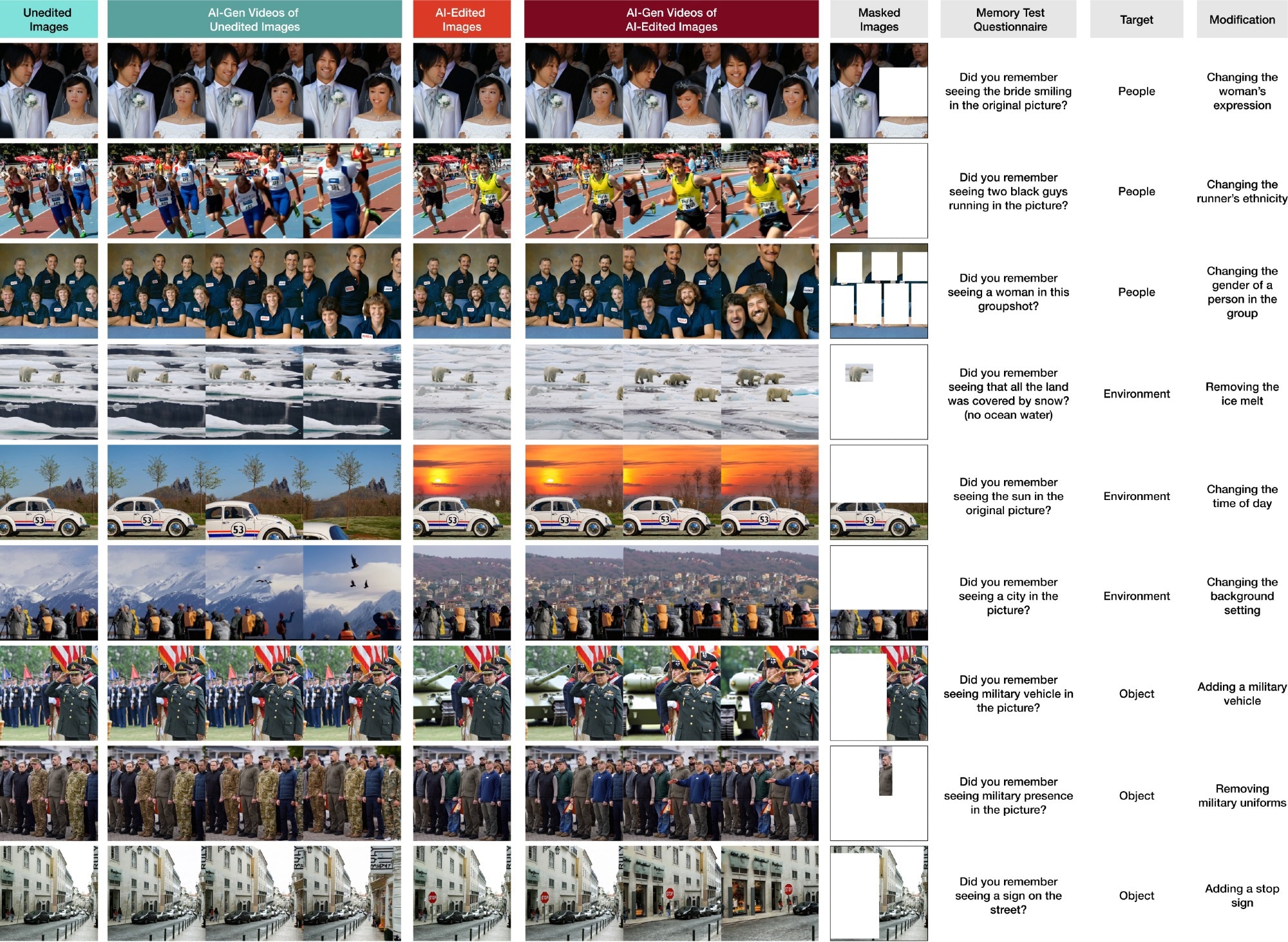

The stimulus set consisted of four distinct categories: unedited images, AI-edited images, AI-generated videos from unedited images, and AI-generated videos from AI-edited images. The edits were further divided into three subgroups based on the type of change: People, Objects, and Environment. In the questionnaire, masked versions of the images were used to facilitate recall without revealing the edited features.

Experimental Methodology

The researchers investigated the influence of AI-altered images and videos on the formation of false memories. A total of 200 participants were shown 24 original images, followed by AI-modified versions featuring changes in people, objects, or environments. Participants were then asked to recall specific details from the original images.

The study design simulated real-world scenarios where individuals encounter AI-edited media on platforms like social media, often without awareness of the alterations.

Four experimental conditions were tested: unedited images, AI-edited images, AI-generated videos of unedited images, and AI-generated videos of AI-edited images. The Shapiro-Wilk test was used to check whether the data were normally distributed, and the Kruskal-Wallis test, a non-parametric method, was used to compare memory accuracy and confidence levels across conditions. Participants’ familiarity with AI filters was also evaluated.
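To make the Kruskal-Wallis step more concrete, the following is a minimal pure-Python sketch of the H statistic with the standard tie correction. It is not the authors' analysis code: the function names `average_ranks` and `kruskal_wallis_h` and the per-participant counts are made up for illustration. The test pools all observations, ranks them, and checks whether the rank sums differ between groups more than chance would suggest.

```python
from collections import Counter


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of values tied with order[i].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions are 0-based, ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kruskal_wallis_h(groups):
    """Return the tie-corrected Kruskal-Wallis H statistic for a list of groups."""
    pooled = [v for group in groups for v in group]
    n = len(pooled)
    ranks = average_ranks(pooled)

    total = 0.0
    start = 0
    for group in groups:
        rank_sum = sum(ranks[start:start + len(group)])
        total += rank_sum ** 2 / len(group)
        start += len(group)
    h = 12.0 / (n * (n + 1)) * total - 3 * (n + 1)

    # Tie correction: divide by 1 - sum(t^3 - t) / (n^3 - n) over tie groups.
    tie_sizes = Counter(pooled).values()
    correction = 1.0 - sum(t ** 3 - t for t in tie_sizes) / (n ** 3 - n)
    if correction == 0:
        raise ValueError("all values are identical; H is undefined")
    return h / correction


# Made-up false-memory counts per participant, one list per condition.
# These numbers are NOT from the study.
hypothetical_counts = [
    [1, 0, 2, 1, 1],  # unedited images
    [2, 1, 3, 2, 2],  # AI-edited images
    [1, 1, 2, 0, 2],  # videos of unedited images
    [3, 2, 4, 3, 2],  # videos of AI-edited images
]
print(round(kruskal_wallis_h(hypothetical_counts), 3))
```

To obtain a p-value, H is compared against a chi-square distribution with one fewer degree of freedom than the number of groups. The sketch stops at the statistic to stay dependency-free.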

Key findings indicated that exposure to AI-edited media could distort memory, with participants frequently recalling false details. AI-generated videos of AI-edited images not only led to more false memories but also raised participants’ confidence in these inaccuracies to 1.19 times the level seen with unedited images.

The authors also highlighted the risk of false memories in sensitive scenarios, such as AI-edited political content, and explored the impact of labels that flag media as AI-manipulated.

Results of Analysis

The primary analysis revealed that AI-edited images significantly distort memory recall, leading to increased false memories and heightened confidence in these inaccuracies. Specifically, AI-edited images induced 1.67 times as many false memories as unedited ones, and when these AI-edited images were converted into videos, the figure rose to 2.05 times the unedited baseline.
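The multipliers reported here can be read as ratios against the unedited-image control. The short sketch below shows that arithmetic, assuming the ratio is taken over mean false-memory counts per condition. The helper `relative_to_control`, the condition names, and the input values are hypothetical placeholders, not figures from the paper.

```python
def relative_to_control(condition_means, control="unedited_image"):
    """Express each condition's mean as a multiple of the control mean."""
    baseline = condition_means[control]
    if baseline <= 0:
        raise ValueError("control mean must be positive to form a ratio")
    return {name: mean / baseline for name, mean in condition_means.items()}


# Placeholder means chosen only to make the arithmetic easy to follow.
hypothetical_means = {
    "unedited_image": 2.0,
    "edited_image": 3.0,
    "video_of_edited_image": 4.0,
}
ratios = relative_to_control(hypothetical_means)
for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f}x control")
```

On this reading, a multiplier of 1.0 means no change from the unedited condition, so a value such as 2.05 corresponds to roughly double the false memories seen with unedited images.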

Confidence in false memories was similarly affected, with AI-generated videos of AI-edited images inducing the highest confidence levels. In contrast, no significant differences were observed in uncertain memories across conditions.

Further subgroup analysis based on image content (daily life, news, archival) showed a consistent increase in false memory reports across all categories when exposed to AI-edited images and videos.

Additional exploratory analysis indicated that AI manipulations of people, objects, and environments resulted in varying levels of false memories, with people-related edits producing the highest number of false memories, and environmental edits showing the largest relative increase in memory distortion.

Insights and Implications

AI-edited and AI-generated media significantly distorted memory, producing false memories that affected both what participants recalled and how confident they were in it.

The study indicated that participants exposed to AI-altered images and videos were more likely to report inaccurate memories, with environmental changes leading to a 2.2x increase in false memory reports.

False memories persisted even when AI-edited content was labeled, highlighting the need for more proactive measures. Age influenced susceptibility, with younger individuals being more affected, and skepticism and familiarity with AI offered only limited protection.

In legal contexts, AI-induced false memories could influence witness testimony, emphasizing the need for AI detection tools to ensure the integrity of evidence.

AI also posed risks in spreading misinformation by altering public media, potentially affecting societal beliefs and voter opinions.

To mitigate these risks, the development of robust AI detection tools and media literacy programs is crucial.

Additionally, while AI can be used positively for therapeutic memory reframing and boosting self-esteem, ethical considerations regarding consent and privacy are essential.

Future research should address these issues, exploring long-term effects and developing strategies to counteract AI-induced distortions.

Conclusion

The authors demonstrated that AI-altered media significantly distorted human memory, with AI-generated videos exacerbating this effect.

Participants exposed to AI-edited images and videos were more likely to develop false memories and show high confidence in these inaccuracies, particularly with AI-generated videos.

These findings have critical implications for legal proceedings, public misinformation, and ethical AI applications.

While AI can offer therapeutic benefits, such as reframing traumatic memories, it also poses risks that necessitate stringent regulations and improved detection tools.

Future research should focus on developing interventions and fostering interdisciplinary collaboration to address the challenges of AI-induced memory distortion.

*Important notice: arXiv publishes preliminary scientific reports that are not peer-reviewed and, therefore, should not be regarded as definitive, used to guide development decisions, or treated as established information in the field of artificial intelligence research.

Journal reference:

- Preliminary scientific report.

Pataranutaporn, P., Archiwaranguprok, C., Chan, S. W. T., Loftus, E., & Maes, P. (2024). Synthetic Human Memories: AI-Edited Images and Videos Can Implant False Memories and Distort Recollection. arXiv. DOI: 10.48550/arXiv.2409.08895, https://arxiv.org/abs/2409.08895