A new hybrid optical-digital AI system uses a massive fixed metasurface and a tiny trainable backend to match top digital models across vision tasks, while delivering striking speed gains in demanding applications such as cancer slide analysis.

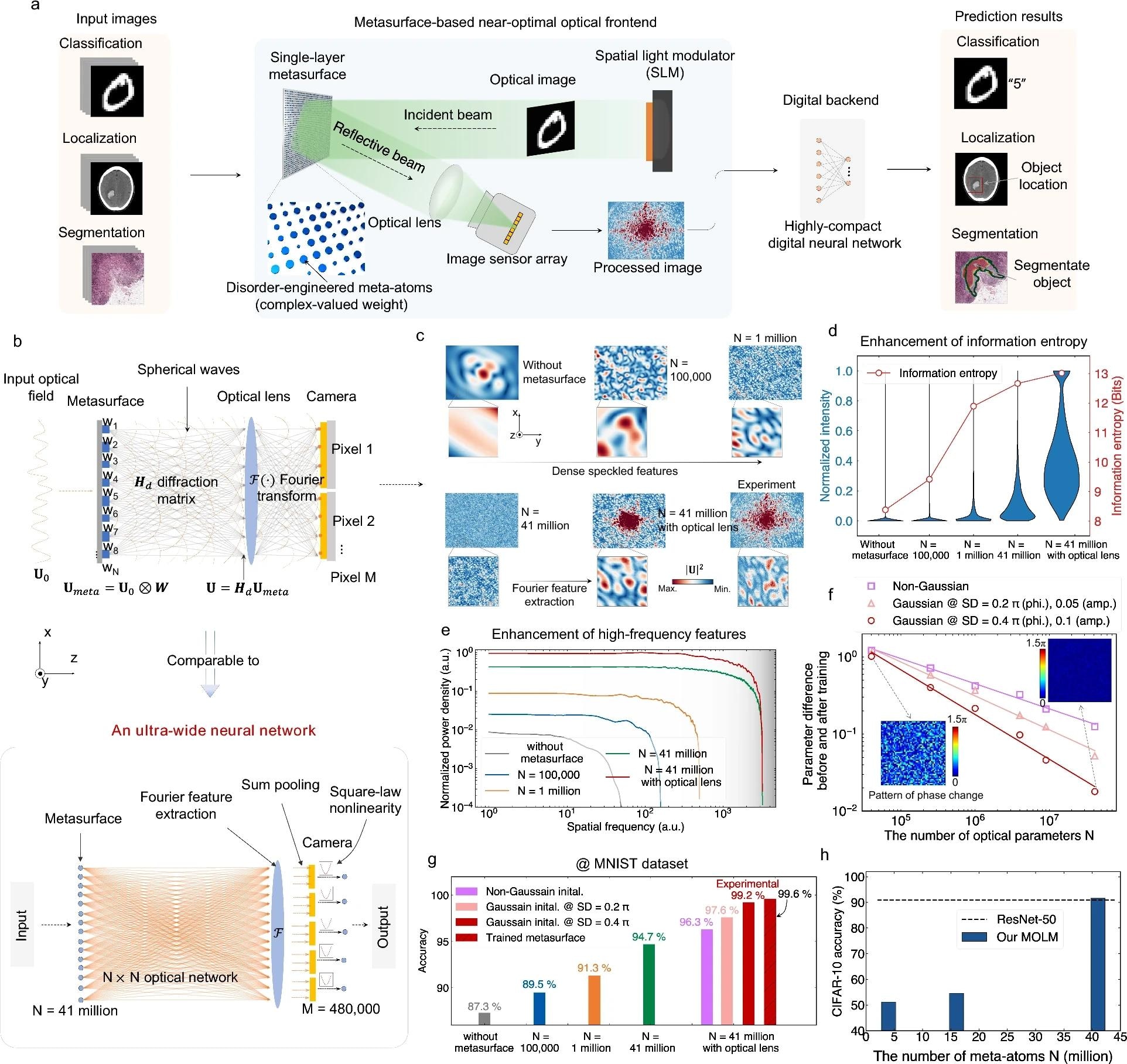

The metasurface-based optical learning machine (MOLM). a Schematic of the MOLM. The optical image generated by the SLM is projected onto a single-layer metasurface consisting of a massive number of cylindrical silicon meta-atoms. An optical lens then collects the optical image reflected by the metasurface. Following that, the optical field at the focusing plane is captured by an image sensor array. Lastly, the captured image is fed into a digital neural network to produce the prediction result. b Illustration of the metasurface-based ultra-wide neural network. c The images transformed by the metasurface-based optical frontend versus the number of meta-atoms N. d The value distribution and information entropy of the transformed images versus the number of meta-atoms N. e The normalized power density of the transformed image versus the spatial frequency for different numbers of meta-atoms N. f The parameter difference (RMSE) before and after training versus the number of optical parameters N. g The MNIST accuracies of the metasurface-based optical frontend with different configurations. h The experimental CIFAR-10 accuracy versus the number of meta-atoms

Neural networks (NNs) have emerged as a powerful paradigm across diverse scientific and technological domains, instigating transformative advancements in areas such as drug discovery, image processing, autonomous vehicles, and medical diagnostics. Compared with their digital counterparts, optical neural networks (ONNs) hold considerable promise owing to the high degree of parallelism offered by light. By harnessing this parallelism, ONNs can deliver significant advantages in terms of energy efficiency and operational speed. However, despite the theoretical potential of ONNs, their scalability to modern model sizes is constrained by practical bottlenecks: training large ONNs is computationally prohibitive, and implementing or tuning millions of optical components is highly susceptible to fabrication imperfections and alignment errors. Consequently, ONNs continue to exhibit a notable performance gap when benchmarked against state-of-the-art digital AI models.

In a recent paper published in eLight, a research team led by Professor Chaoran Huang from The Chinese University of Hong Kong has developed a metasurface-based optical learning machine (MOLM) to address the aforementioned challenges. They use a large-scale optical metasurface integrating 41 million parameters to construct an ultra-wide ONN. At this ultra-wide scale, an ONN built on a fixed, untrained metasurface performs nearly as well as a fully trained one. The residual mismatch is compensated by a compact digital backend with only 10² to 10⁴ trainable parameters, which allows the system to adapt to new tasks without retraining or re-fabricating the metasurface. Experimentally, the resulting hybrid system demonstrates scalable machine vision across six distinct tasks, achieving accuracy competitive with state-of-the-art digital AI models, including ResNet and Vision Transformer. These tasks include a real-world application: breast cancer diagnosis based on billion-pixel-level pathological slide images. The approach is highly scalable, robust to fabrication errors, and capable of operating under both coherent and incoherent illumination, thus providing a practical pathway toward large-scale, high-performance optical AI computing.
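The division of labor described above, a large fixed random frontend followed by a small trained backend, can be illustrated with a toy random-feature model. The sketch below is not the team's implementation: the complex Gaussian projection merely stands in for the untrained metasurface, the squared magnitude stands in for intensity detection at the sensor, ridge regression stands in for the digital backend training, and the two-class dataset, sizes, and function names are all hypothetical.

```python
import numpy as np

def make_fixed_frontend(n_inputs, n_features, seed=0):
    """Draw a random complex projection once; it is never trained afterwards."""
    rng = np.random.default_rng(seed)
    real = rng.normal(size=(n_inputs, n_features))
    imag = rng.normal(size=(n_inputs, n_features))
    return (real + 1j * imag) / np.sqrt(2 * n_inputs)

def frontend_features(x, weights):
    """Assumed sensor model: record the intensity |field|^2 of the projection."""
    return np.abs(x @ weights) ** 2

def add_bias(features):
    """Append a constant column so the readout can learn an offset."""
    return np.hstack([features, np.ones((features.shape[0], 1))])

def train_readout(features, one_hot_targets, ridge=1e-3):
    """Fit only the linear backend, in closed form, by ridge regression."""
    f = add_bias(features)
    gram = f.T @ f + ridge * np.eye(f.shape[1])
    return np.linalg.solve(gram, f.T @ one_hot_targets)

def predict(features, readout):
    return np.argmax(add_bias(features) @ readout, axis=1)

def make_toy_data(n_samples, n_inputs, rng):
    """Hypothetical task: is the bright region in the first or second half?"""
    labels = rng.integers(0, 2, size=n_samples)
    half = n_inputs // 2
    bright = rng.uniform(0.5, 1.0, size=(n_samples, half))
    dim = rng.uniform(0.0, 0.2, size=(n_samples, half))
    x = np.where(labels[:, None] == 0,
                 np.hstack([bright, dim]), np.hstack([dim, bright]))
    return x, labels

def demo(n_inputs=8, n_features=128, seed=0):
    rng = np.random.default_rng(seed)
    weights = make_fixed_frontend(n_inputs, n_features, seed=seed + 1)
    x_train, y_train = make_toy_data(400, n_inputs, rng)
    x_test, y_test = make_toy_data(200, n_inputs, rng)
    readout = train_readout(frontend_features(x_train, weights),
                            np.eye(2)[y_train])
    predictions = predict(frontend_features(x_test, weights), readout)
    return float(np.mean(predictions == y_test)), readout.size

if __name__ == "__main__":
    accuracy, n_trainable = demo()
    print(f"test accuracy: {accuracy:.3f}, trainable weights: {n_trainable}")
```

In this toy setting the only trained quantities are the 258 readout weights; the random projection is generated once and left untouched, which mirrors the idea of reusing one fabricated chip across tasks.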

By leveraging the ultra-wide ONN's novel computing architecture, the proposed MOLM not only effectively circumvents the challenges faced by traditional ONNs but also demonstrates outstanding performance and task versatility. The following sections will elaborate on the key experimental results:

Record-high accuracy on benchmark datasets

The research team experimentally validates the outstanding performance of the metasurface-based optical learning machine (MOLM) in image classification benchmark tasks. Using a compact digital backend with only 3,000 trainable weights, a fixed, untrained metasurface achieves classification accuracy of up to 99.2% on the MNIST dataset. This performance surpasses currently reported ONNs and closely approaches that of a fully trained end-to-end metasurface (99.6%). For the more challenging CIFAR-10 image dataset, an experimental accuracy of 91.6% is also attained, rivaling large-scale AI models such as ResNet-50, which contains tens of millions of parameters.

Scalable to diverse vision tasks

Using the same metasurface chip, the MOLM can adapt to six distinct vision tasks without requiring physical reconfiguration or optical parameter tuning. These tasks encompass not only simple MNIST and CIFAR-10 classification but also practical medical image analysis scenarios, including COVID-19 chest X-ray image classification, intracranial hemorrhage (ICH) detection, and disease classification on the NIH dataset. Furthermore, the system can be applied to video-based human action recognition tasks and to cancer detection applications involving whole-slide images at the pixel level. Across all these tasks, the system achieves performance comparable to large-scale digital AI models (e.g., ResNet, ViT, and SAM), while reducing the number of digital parameters by several orders of magnitude.

Scalable to complex neural network architectures

By leveraging the ultra-wide NN computing framework with random parameters, a metasurface-based recurrent neural network (meta-RNN) can be constructed by integrating the MOLM as the hidden layer of a temporal processing unit, thereby enabling the MOLM to process video frame sequences. The meta-RNN is applied to human action recognition tasks on the KTH dataset. Experimental results demonstrate that the meta-RNN achieves frame-level accuracy of 97.5% and action classification accuracy of 99.1% with only 9,180 digital weights. Capitalizing on the inherent low latency and parallel processing advantages of optical computing, the system achieves a processing rate of 1,968 frames per second in practical video inference applications.
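To make the idea of a fixed random hidden layer inside a recurrent loop more concrete, here is a minimal reservoir-style sketch. It is an illustration under stated assumptions rather than the meta-RNN itself: the `tanh` nonlinearity, the random recurrent matrix, the layer sizes, and the majority vote that turns frame-level labels into a clip-level label are all hypothetical choices, and the readout here is random instead of trained. The only detail taken from the dataset is that KTH has six action classes.

```python
import numpy as np

def make_fixed_layers(n_pixels, n_hidden, seed=0):
    """Random input and recurrent weights, drawn once and never trained."""
    rng = np.random.default_rng(seed)
    w_in = rng.normal(size=(n_pixels, n_hidden)) / np.sqrt(n_pixels)
    w_rec = 0.5 * rng.normal(size=(n_hidden, n_hidden)) / np.sqrt(n_hidden)
    return w_in, w_rec

def run_sequence(frames, w_in, w_rec):
    """Feed frames (shape: T x n_pixels) through the fixed recurrent layer."""
    state = np.zeros(w_in.shape[1])
    states = []
    for frame in frames:
        # The new state mixes the current frame with the previous state.
        state = np.tanh(frame @ w_in + state @ w_rec)
        states.append(state)
    return np.stack(states)

def classify_clip(states, readout):
    """Frame-level labels from a linear readout; clip label by majority vote."""
    frame_labels = np.argmax(states @ readout, axis=1)
    clip_label = int(np.bincount(frame_labels,
                                 minlength=readout.shape[1]).argmax())
    return frame_labels, clip_label

if __name__ == "__main__":
    n_pixels, n_hidden, n_classes = 16, 32, 6  # six KTH action classes
    w_in, w_rec = make_fixed_layers(n_pixels, n_hidden)
    frames = np.random.default_rng(1).random((10, n_pixels))
    states = run_sequence(frames, w_in, w_rec)
    # Placeholder readout; in practice this small matrix is what gets trained.
    readout = np.random.default_rng(2).normal(size=(n_hidden, n_classes))
    print(classify_clip(states, readout))
```

In such a design only the readout matrix would be trained, which keeps the digital weight count small while the fixed layers carry the temporal memory.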

Solving real-world challenges: cancer diagnosis based on multi-gigapixel images

The MOLM also offers unique advantages in computationally intensive real-world applications. The MOLM is employed to accelerate the segmentation and localization of tumor regions in a 10-billion-pixel whole slide image (WSI), a task that is extremely time-consuming for all-digital NNs. Experimental results indicate that the MOLM achieves an Intersection over Union (IoU) comparable to that of the cutting-edge segmentation AI model (SAM), which has hundreds of millions of parameters. Notably, the MOLM achieves a significant breakthrough in inference time: complete inference on a single WSI requires only 1.02 seconds, whereas SAM requires 1.48 hours for the same task. This efficiency advantage matters for practical clinical deployment: within a 12-hour working day, the MOLM can complete diagnostic analysis of over 42,000 patient samples, while SAM can process only 8 cases in the same timeframe.
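The daily throughput figures follow directly from the two reported inference times. The short calculation below reproduces them, assuming one whole slide image per patient sample and back-to-back processing with no loading or idle time; the variable names are illustrative.

```python
SECONDS_PER_HOUR = 3600

molm_seconds_per_slide = 1.02                    # reported MOLM inference time
sam_seconds_per_slide = 1.48 * SECONDS_PER_HOUR  # reported SAM inference time
workday_seconds = 12 * SECONDS_PER_HOUR          # 12-hour working day

# How many complete slides fit into one working day for each system.
molm_slides_per_day = int(workday_seconds // molm_seconds_per_slide)
sam_slides_per_day = int(workday_seconds // sam_seconds_per_slide)

# Ratio of per-slide inference times.
speedup = sam_seconds_per_slide / molm_seconds_per_slide

print(molm_slides_per_day)  # 42352
print(sam_slides_per_day)   # 8
print(round(speedup))       # 5224
```

The per-slide speedup works out to roughly 5,200 times, which is why the daily totals differ by more than three orders of magnitude.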

Robustness to fabrication errors and broadband incoherent light operation

The MOLM demonstrates two key advantages in terms of engineering implementation. Firstly, by adopting a computing architecture based on a random optical metasurface, the system is inherently robust against fabrication and misalignment errors. To demonstrate this, the research team experimentally characterizes metasurface chips fabricated in three distinct batches. Although these chips exhibit a fabrication error of approximately ±6%, the experimentally measured MNIST classification accuracy varies by less than 1% across all tested devices.

Secondly, unlike conventional ONN systems, which typically rely on coherent laser sources, the MOLM can operate under broadband incoherent illumination. Experimental results demonstrate that across spectral bandwidths ranging from 20 to 100 nm, the system's classification accuracy remains above 98.3% on the MNIST dataset, with only ∼1% degradation. The above two advantages eliminate the need for expensive, complex laser systems and precision optical alignment setups, enabling the system to operate directly under natural ambient light and thereby substantially reducing both hardware costs and system complexity.
